Bad QA practices in Software Teams

Some QA processes are inefficient and hamper continuous delivery. Quality should shift left and the role of a QA must be re-examined in some agile teams.

Background

To my dismay, a lot of teams still set aside capacity to “do QA” after a story is marked development complete.

This usually means that some people in the team are solely responsible for testing every story, mostly manually, after the code changes are done.

Why is it bad?

Because the testing is manual in most cases, it is

- Expensive, because it takes time. It is also repetitive.

- Prone to error, because humans make mistakes.

These are fairly solid reasons to reconsider the practice. Nevertheless, there’s more.

Usually gets in the way of Continuous Delivery

Teams that do a lot of manual testing usually don’t have practices like Continuous Delivery (CD) in their software development toolkit. A long-running manual QA process at the end of a feature hampers the team’s ability to deploy changes to production quickly.

This is a loss for the team, because practices like CD help your team move faster and delight your customers even more.

The book Accelerate explores this in detail.

Encourages a “throw it over the wall” culture

In teams that have their QA process set up so that one or more separate people test every completed feature, I have often seen a behavioral pattern that is detrimental to software quality.

This is the pattern where developers throw untested junk over the metaphorical QA wall, expecting someone else to magically figure out all the possible defects.

Not only does this reduce quality, it also slows delivery because of the frequent back-and-forth between the “developer” and the “QA”.

Causes loss of Early Feedback

We should tweak our feature development process so that we get bad news early.

This allows us to pivot earlier, and thus save time. Significant implementation time can be saved when defects in the requirements, or even the architecture, are found early.

Leaving a QA engineer to fish out the defects, or even to come up with a testing strategy, at the very end of the feature development process does not help with that.

How do we change?

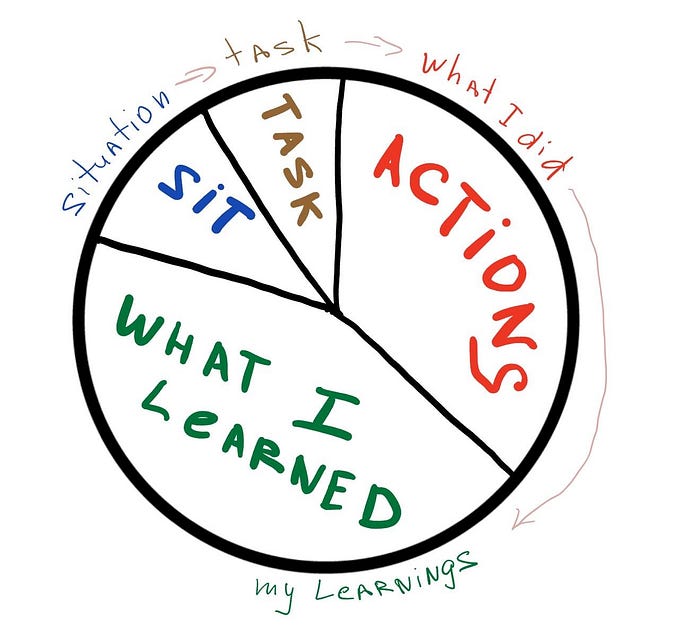

There is a concept called “Shift Left” that I frequently advise my teams to think about.

What this means is that we move certain processes, like testing, earlier in the development process. Do not leave them until the end for the so-called “QA team”.

A good QA is someone who understands this, and tries to push most of the testing metaphorically left, making it part of development.

This means more

- Unit and Integration tests in the affected service(s)

- Contract tests between services

- Automated Acceptance test(s) that test the feature end-to-end

This changes the game, because it means less testing effort at the end of a feature. These kinds of tests can also be run as part of your development pipeline, removing manual effort further.

This is the fundamental concept behind a healthy test suite, and it is explored in detail in various posts online about what is known as the Test Pyramid.
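To make the first item in the list above a little more concrete, here is a minimal sketch of a unit test that a pipeline could run on every commit. The `basket_total` function and its discount rule are hypothetical, invented purely for illustration; the point is only that the developer writes the check alongside the code, and that the run produces an exit code a pipeline can act on.

```python
import unittest
from decimal import Decimal


def basket_total(prices, discount_percent=0):
    """Hypothetical business rule: sum the prices, then apply a percentage discount."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = sum(prices, Decimal("0"))
    return subtotal * (Decimal(100) - Decimal(discount_percent)) / Decimal(100)


class BasketTotalTest(unittest.TestCase):
    def test_empty_basket_costs_nothing(self):
        self.assertEqual(basket_total([]), Decimal("0"))

    def test_discount_is_applied_to_the_subtotal(self):
        prices = [Decimal("10.00"), Decimal("5.00")]
        self.assertEqual(basket_total(prices, discount_percent=10), Decimal("13.50"))

    def test_invalid_discount_is_rejected(self):
        with self.assertRaises(ValueError):
            basket_total([Decimal("1.00")], discount_percent=150)


def run_suite():
    """Run the tests programmatically, as a pipeline step might, and return the result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(BasketTotalTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    # A non-zero exit code is what lets a pipeline fail the build.
    raise SystemExit(0 if run_suite().wasSuccessful() else 1)
```

Tests like these run in milliseconds, which is why they can sit at the very start of a pipeline rather than at the end of a feature.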

Role of the QA

The QA role should be consultative.

A good QA does not have to write the tests mentioned above; instead, they become an enabler for the developers to do this themselves.

Remember, shift left!

In this way, the QA’s job is to ensure that every feature has all the necessary safety nets in place when it gets to production. They do not necessarily have to build the nets themselves!

From my experience

One of my best experiences with the QA role was with an engineer who made sure they did not spend their days clicking around buttons to “test” stories and features.

Instead they spent the time “paving the road” and coaching the developers to incorporate quality into the development process.

This included introducing new practices like the “three amigos” story kickoff, coaching the team on contract testing, and reviewing or pairing on the unit and integration tests that the developers wrote for a feature.

The QA I worked with even spent time setting up the infrastructure the team needed to improve quality, including building good pipelines.

In short, they were an enabler, not someone who stood in the pathway to production, only to cross-check everything the developer had done.

Needless to say, the developers grew more confident about making changes. They also felt responsible for the quality of the product they were delivering.

UI Testing

I think this warrants a separate section. The knee-jerk reaction to manual testing that I have seen on most teams is to assume they need a grand automated Selenium test suite to ensure that the application is working correctly. Often teams bring in dedicated people to build it.

So is it a better option? No.

You want to minimize the number of automated UI tests because they are often flaky and go out of date easily.

Instead, I would always recommend well-written unit tests within the web application, and contract tests between the web application and the backend service. These kinds of tests are quicker to run and more reliable.

This enables us to have just one or two happiest-path scenarios that test the most important customer flow through an automated UI test.

Everything else is covered through unit/integration/contract tests.
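As a rough illustration of the contract tests mentioned above, the sketch below checks that a backend response still contains the fields the web application depends on. It is a hand-rolled simplification and not the API of any contract-testing tool; the `ORDER_CONTRACT` fields, the `provider_order_response` stand-in, and the sample values are all assumptions made up for the example.

```python
# Hypothetical contract: the fields (and types) the web application relies on
# when it reads an order from the backend service.
ORDER_CONTRACT = {
    "order_id": str,
    "status": str,
    "total_cents": int,
}


def contract_violations(response, contract):
    """Return a list of readable violations; an empty list means the contract holds."""
    violations = []
    for field, expected_type in contract.items():
        if field not in response:
            violations.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            actual = type(response[field]).__name__
            violations.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return violations


def provider_order_response():
    """Stand-in for the backend's handler; a real provider build would call its own code."""
    # The extra "currency" field is allowed: the consumer only checks what it uses.
    return {"order_id": "A-1001", "status": "PAID", "total_cents": 1350, "currency": "EUR"}


if __name__ == "__main__":
    problems = contract_violations(provider_order_response(), ORDER_CONTRACT)
    # Fail the provider's build if it would break the web application.
    raise SystemExit("\n".join(problems) if problems else 0)
```

Because a check like this needs neither a browser nor a deployed environment, it can run in the backend's own pipeline and flag a breaking change before the web application ever sees it.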

In one of my last projects, the only UI test we had was there to prove that “a customer can order a product from the site and check out their shopping basket”.

That was the most important customer flow for us and our primary money-maker. That single test was enough.

In Conclusion

A lot of teams need to understand that delivering new features for our customers is better achieved when we are all playing as a team. Also, quality is not an afterthought, but rather something that needs to be baked into the development process.

In most cases, you don’t need to separate developers and QAs so that they work behind their own walls. This is ineffective, and wastes time! Make the most effective use of people.

That’s how we create happy teams, who in turn create happy customers.